Technology

-

No More Working From Home

It’s been almost ten years since I posted anything; I guess things got even busier than I realized! Fortunately, I now have a little more time since I’m not working from home. Or from the office! I greatly enjoyed my time at Google, so it was a tough decision to leave, but I’ll save that for LinkedIn, maybe. For now, I thought it’d be fun to share the final state of my home office.

I’ve always been the type to do a little work from home, and the Chrome team was highly distributed to begin with, so in many respects we were set up for the pandemic as well as we could be. The one exception is that my home office was in a windowless room in the basement, with a single bulb overhead fixture, so lighting and thus video calls were terrible by default. Fortunately, that’s a fixable problem, though I may have gone a little overboard with the solution:

On the left is my “normal” desk, essentially the same as it’s always been, except that during my time at Google a KVM switch let me swap between my personal Windows box and a corporate Chrome OS machine. On the right is the final iteration of the setup I used for videoconferencing.

Lights, Camera, Action!

A few Google Home-integrated smart plugs switch between the default lighting configuration above and VC mode:

The lights follow suggestions I found online for a standard 3-point lighting setup, with a large key light and smaller fill and (overhead) back lights. Many professionals have books in the background, so you know you’re talking to someone educated. Mine is almost all videogame paraphernalia – no point trying to fool anyone!

From the inside, it looks like a weird three-screen setup:

The trick is that the middle screen is actually the reflection of a flat display that’s facing up; it reflects off beamsplitter glass that has a camera behind it. As a result, when there’s a meeting in progress, the person you’re talking to appears directly in front of the camera, so they see you looking straight at them (whereas before, with a big monitor, it really seemed like I was looking off into space whenever I was looking at their face on screen).

The Tech Pieces

At work I gave the disclaimer that I didn’t spend any of Google’s money on this setup, since it’s frankly a little ridiculous given that a good webcam and key light are 90% of what you need. That said, the setup uses the following:

- Camera: Nikon Z7 II + Nikon 24-70mm f/2.8G (via FTZ adapter), using HDMI out. Overkill and not even optimal – the Z6 II is cheaper and better for video – but it’s the camera I had. Sadly, while the Z7 II supports USB power, it can’t run continuously on it, so I still use a third-party EN-EL15 replacement that runs on AC power.

- Mirror: This is an 18″ x 18″ piece of 60/40 beamsplitter glass, held in place with a Nikon 7070 binocular window mount, paired with a 15.4″ UPERFECT flat panel display (better than others I tried, as its 400-nit brightness helps when only about half the light is reflected by the glass). The final ingredient was an HDMI left/right inverter to undo the mirror flip; it was hard to find, and I finally bought one via Newegg since I wasn’t able to purchase it directly via Alibaba.

- Microphone: Rode PodMic. I’d been using a Blue Yeti USB, which is great but doesn’t do analog audio (cranking the volume and using its headphone port is way too noisy). I connected the PodMic via the inexpensive Pyle PMUX9 audio interface, only to discover it needed a ton of gain and didn’t natively support 48V phantom power, so I was forced to add a Cloudlifter.

- A/V Sync: Blackmagic ATEM Mini Pro. Initially I’d set this up to easily switch between showing what’s on my screen vs. the camera feed, but this doesn’t always interact well with videoconferencing software (which will make screen share content large for all participants, but can’t always be told to do the same for a camera feed). It was also supposed to do USB capture, but unfortunately the version I have is USB 2.0 only (which reduces quality) and had compatibility issues with Chrome OS. So now its sole purpose is to combine the video from the camera with the audio from the mic, with a ~2-frame audio delay for A/V sync purposes.

- Capture: The tried-and-true Elgato Cam Link (non-4K) allows the HDMI feed to be used in realtime as a webcam – and works fine on Chrome OS.

- Lights: The key light is a Neewer CB60B (70W) with a 34″ Glow softbox. The fill light is a Neewer 660B with collapsible softbox; the back light is a GVM 800D-RGB. They all support color temperature adjustment, and have been really reliable even for days with 8+ hours of continuous use.

- PC: I use an Asus Chromebox 4; while less flexible than a Windows box (e.g. for USB-based control of my camera, or using virtual camera drivers), like most Chrome OS devices it can be enterprise enrolled – so that I can use it securely for work, under Google management – despite being personally purchased.
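A few of the numbers above are easy to sanity-check with quick arithmetic. This is purely illustrative: the frame rates are assumptions (I never noted what the camera feed runs at), the 0.5 reflectance follows the rough "half the light" estimate above rather than a measurement, and the function names are hypothetical.

```python
# Back-of-the-envelope numbers for the videoconferencing rig (illustrative only).


def frames_to_ms(frames: float, fps: float) -> float:
    """Convert an audio delay expressed in video frames into milliseconds."""
    return frames * 1000.0 / fps


def reflected_nits(display_nits: float, reflectance: float) -> float:
    """Approximate luminance of the display's reflection in the beamsplitter."""
    return display_nits * reflectance


def mirror_row(row: list) -> list:
    """Reverse one row of pixels left to right.

    The beamsplitter shows a mirror image of the upward-facing display, so
    the HDMI inverter applies this flip to every row to make text read correctly.
    """
    return row[::-1]


if __name__ == "__main__":
    for fps in (30, 60):  # assumed frame rates
        print(f"2 frames at {fps} fps = {frames_to_ms(2, fps):.1f} ms")
    # 0.5 reflectance follows the rough "half the light" estimate.
    print(f"400-nit panel reflected: ~{reflected_nits(400, 0.5):.0f} nits")
    print(mirror_row(["left", "middle", "right"]))
```

At 30 fps a two-frame delay is about 67 ms; at 60 fps it is about 33 ms.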

Much of this complexity would be eliminated using a much less expensive camera with a decent mic input (the Z7 II that I already owned is great in other respects, but has a poor mic input), and usable USB webcam performance. Then you’d just need the lights (there have been Black Friday deals for $200 bundles that give you a 3-point setup), and the mirror. But it was definitely fun to tinker with this setup over the years, and while it’s certainly not Google Beam it is still much cheaper!

-

Learning About Color

Over the past half year or so, understanding color and how it’s handled digitally has become much more important to me. I even understand how to spell it the American way now! Some of this was related to my work, where handling video content in different color spaces is becoming increasingly important, especially with the emergence of new standards around UHD Premium and HDR. However, for personal use – choosing a monitor, editing photos, sharing them – an improved understanding has helped significantly, as there’s an unfortunate and unsettling set of tradeoffs in digital color handling. I’m still no expert, but here’s what I’ve learned that’s relevant in personal use.

Color Fundamentals

We learned back in elementary school that our eyes have rods and cones, that the cones allow us to see color, and that we can roughly see light with wavelengths between about 400nm (violet) and 700nm (red). We’ve got three types of cones, typically called Short, Middle, and Long based on the wavelengths they are most sensitive to. And while color in reality is pretty complex – an object will emit or reflect light at every wavelength we can see, just at a different level for each wavelength – all that matters to our brain is how strongly each type of cone responds. As a result, two physically different spectra that produce the same cone responses look identical, an effect called metamerism. Effectively, our cones turn a complex blend of light at different wavelengths into a set of three “tristimulus” values – in much the way that our tongue turns fancy food into measurements of how sweet, sour, salty, bitter, and umami something is.

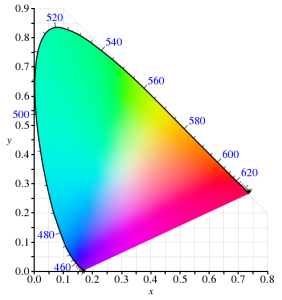
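The cone response described above can be written as three integrals. In the notation below (my own labels, not an official one), $P(\lambda)$ is the spectrum of light reaching the eye and $\bar{l}$, $\bar{m}$, $\bar{s}$ are the sensitivity curves of the Long, Middle, and Short cones, integrated over the visible range:

$$
L = \int P(\lambda)\,\bar{l}(\lambda)\,d\lambda, \qquad
M = \int P(\lambda)\,\bar{m}(\lambda)\,d\lambda, \qquad
S = \int P(\lambda)\,\bar{s}(\lambda)\,d\lambda
$$

Two spectra $P_1 \neq P_2$ are metamers when their difference is invisible to all three cone types:

$$
\int \big(P_1(\lambda) - P_2(\lambda)\big)\,\bar{c}(\lambda)\,d\lambda = 0
\quad \text{for each } \bar{c} \in \{\bar{l}, \bar{m}, \bar{s}\}
$$

Many different spectra satisfy this for any given target, which is what lets a display stand in for pure yellow light with a mix of red and green.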

In 1931, the CIE helpfully built a model for quantitatively representing any color that humans are capable of perceiving. It used a model similar to our cones for transforming any visible color spectrum into a set of three values (XYZ). The model is defined so that Y is close to how we perceive luminance (brightness), and normalized to create x and y values that reflect the ratios of X, Y, and Z, and thus the color of something independent of how bright it is. Roughly, this is the CIE 1931 color space (from Wikipedia):

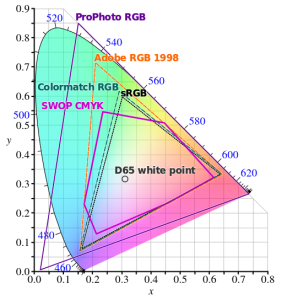
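The normalization described above is simple enough to show directly. This is a minimal sketch; the D65 white values in the example are the standard published figures, and the function name is my own.

```python
def xyz_to_xy(X: float, Y: float, Z: float) -> tuple[float, float]:
    """Project CIE XYZ onto the chromaticity plane (x, y).

    Dividing by X + Y + Z discards overall brightness, so a color and a
    dimmer version of the same color land on the same (x, y) point.
    The third coordinate is implied: z = 1 - x - y.
    """
    total = X + Y + Z
    if total == 0:
        raise ValueError("black has no defined chromaticity")
    return X / total, Y / total


if __name__ == "__main__":
    # D65 white (Y normalized to 1.0) and the same white at half intensity.
    print(xyz_to_xy(0.95047, 1.0, 1.08883))
    print(xyz_to_xy(0.95047 / 2, 0.5, 1.08883 / 2))
```

Both calls land at roughly (0.3127, 0.3290), the familiar D65 white point.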

Since the very first color TVs, our technology has taken advantage of metamerism to use just three colors – typically red, green, and blue – to reproduce a much wider range of perceived colors. Good thing we don’t have 16 cones like the mantis shrimp, or a mix of red and green would seem nothing at all like yellow – forcing us to build many more primary colors into a display. Despite this, reproducing color accurately is still hard. First, there’s the shape above, which I heard cleverly referred to as “that darned curve that every engineer wishes was a triangle”; it reflects the nature of the cones in our eye, which make it impossible to reproduce some colors (e.g. pure 520nm light) with any combination of other colors. Second, while very pure color sources in our displays allow us to cover more of the visible range, current technology can’t easily produce pure colors. So to make our lives easier, we define color spaces that cover a subset of human vision, and are defined by three color primaries that we can produce (image from Wikipedia):

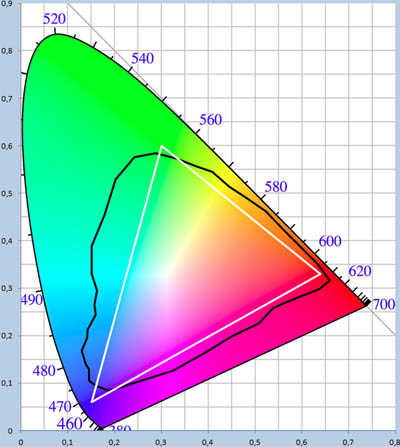

That small triangle in the middle that covers roughly 36% of what we can see? That’s the sRGB color space, and it is the dominant color space in almost all displays, digital photos, and digital video you’ll find today. Video standards like Rec.709 use the same color primaries. Be careful with gamut figures quoted as a percentage of “NTSC”, though: these usually refer to the original 1953 NTSC primaries, which describe a noticeably larger triangle than sRGB. At first glance, sRGB seems depressingly small relative to our vision, to the point you’d expect displays to look terrible. Fortunately, much of what we can technically perceive doesn’t occur in our natural world. The color of almost all physical objects is based on which wavelengths of light they absorb, and no earthly substance can absorb all but a single narrow wavelength. The result is Pointer’s Gamut, which the linked article describes; it’s only modestly bigger than sRGB, and is shown via the black line below:

So when you look at a vivid alien world in Avatar and it seems like nothing you’ve seen on earth, it probably wouldn’t really look like that if it were real either :).
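Because a color space is just a triangle on the chromaticity chart, checking whether a given color is reproducible in sRGB comes down to a point-in-triangle test. This sketch uses the published sRGB primaries; the coordinates for 520nm light are approximate values from the CIE 1931 spectral locus, and the helper names are hypothetical.

```python
# sRGB primaries as CIE 1931 (x, y) chromaticities: red, green, blue.
SRGB_PRIMARIES = ((0.64, 0.33), (0.30, 0.60), (0.15, 0.06))


def _cross(o, a, b):
    """2D cross product of (a - o) and (b - o); its sign says which side of
    the line from o to a the point b falls on."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def in_gamut(xy, primaries=SRGB_PRIMARIES) -> bool:
    """True if chromaticity xy lies inside (or on) the primaries' triangle."""
    r, g, b = primaries
    d1, d2, d3 = _cross(r, g, xy), _cross(g, b, xy), _cross(b, r, xy)
    has_neg = d1 < 0 or d2 < 0 or d3 < 0
    has_pos = d1 > 0 or d2 > 0 or d3 > 0
    # Inside means all three signs agree (a zero means the point is on an edge).
    return not (has_neg and has_pos)


if __name__ == "__main__":
    print(in_gamut((0.3127, 0.3290)))  # D65 white point
    print(in_gamut((0.0743, 0.8338)))  # approx. pure 520nm light
```

The white point falls comfortably inside; the saturated 520nm green does not, which is exactly the "darned curve" problem.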

TVs, Displays, and Calibration

Limited though sRGB seems, most displays today are even more limited. When LCDs began to dominate over CRTs, they were only capable of covering roughly 70% of the sRGB color space – 25% of what you can theoretically perceive. The Dell 3007WFP I used until recently only had 72% sRGB coverage. Most budget monitors are also still at this coverage level – such as the HP 22cwa (the #1 monitor on Amazon), and essentially everything else in the sub-$300 category.

However, the last few years have seen much of the $500+ segment move to providing 100% sRGB coverage, and while still not that common, there are an increasing number of non-exorbitant “wide gamut” options that go beyond sRGB – covering some or all of larger color spaces such as AdobeRGB or DCI-P3 (each roughly 35% larger than sRGB when measured by area on the CIE 1931 chart). The new UHD Premium specification for TVs requires at least 90% coverage of DCI-P3, meaning we’ll see more and more displays with wide gamut support.
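One common, if simplistic, way to compare gamut sizes is the area of each triangle on the CIE 1931 xy chart, computed with the shoelace formula from the published primaries. It is only one yardstick: areas on the later CIE 1976 u′v′ chart, or full 3D gamut volumes, give noticeably different percentages, so treat the result as illustrative.

```python
# Published primaries as CIE 1931 (x, y) chromaticities: red, green, blue.
PRIMARIES = {
    "sRGB": ((0.64, 0.33), (0.30, 0.60), (0.15, 0.06)),
    "AdobeRGB": ((0.64, 0.33), (0.21, 0.71), (0.15, 0.06)),
    "DCI-P3": ((0.680, 0.320), (0.265, 0.690), (0.150, 0.060)),
}


def triangle_area(points) -> float:
    """Area of a triangle via the shoelace formula."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


if __name__ == "__main__":
    base = triangle_area(PRIMARIES["sRGB"])
    for name, prims in PRIMARIES.items():
        ratio = triangle_area(prims) / base
        print(f"{name}: {ratio:.2f}x the sRGB area")
```

By this measure both AdobeRGB and DCI-P3 come out roughly 35% larger than sRGB, though they extend in different directions: AdobeRGB shares sRGB's red and pushes further into green, while DCI-P3 extends both red and green.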

If you’re looking for a display or TV, should you always get as wide gamut a display as you can? Unfortunately, it depends. It definitely makes sense to ensure that you’ve at least got 100% sRGB coverage – this will do a good job rendering all content that’s out there, and most disc players, cable/satellite boxes, game consoles, and PCs will simply assume that your display reproduces sRGB. But when you go beyond 100% sRGB – perhaps to a display that can show the full AdobeRGB or DCI-P3 color spaces – most content will appear over-saturated by default. Sure, you can turn down saturation or use “sRGB mode” if the display offers this, but in that case why get a wide gamut display at all?

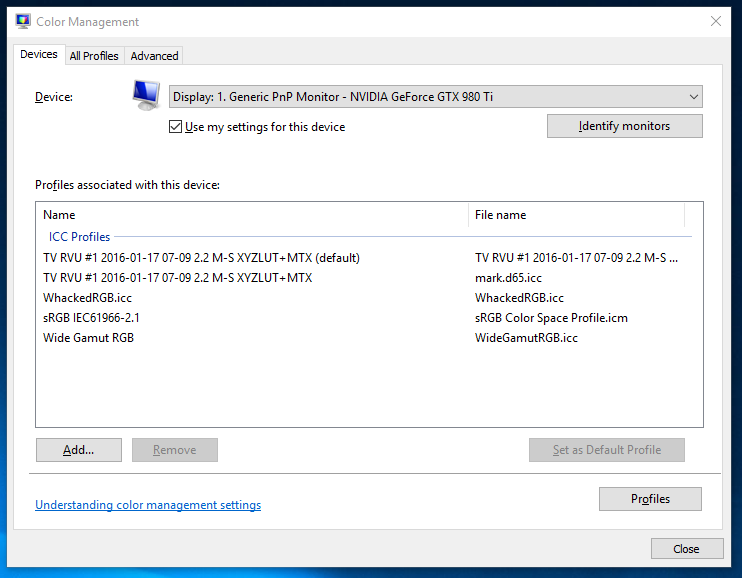

For computer displays, accurate yet wide color requires two things: installing or creating an appropriate color profile for your display, and use of color managed applications. On Windows, it is up to you to manually install a color profile for your display. On Windows 10, Display Settings > Advanced Display Settings > Display Adapter Properties > Color Management… (in the Color Management tab) will open the Color Management tool that allows you to add, remove, and choose the default color profile:

Of course, this assumes your display manufacturer provides a color profile (normally a “.icc” file) for your display. If it doesn’t, you can pick the closest standard color space. However, the better option if you really want the most accurate color is to build a profile for your display. This beats a manufacturer-supplied one, as every display is different and changes over time. Plus, the process of building a profile can help you optimize your display settings. To do this, you’ll need a colorimeter and calibration software that works with it. I bought the X-Rite i1Display Pro, though the less expensive ColorMunki Display is probably equivalent. These are USB peripherals that sit on top of your screen and tell your computer what color is actually being displayed (photo is X-Rite’s):

One word of advice: if you do go with X-Rite, don’t install their software. It’s truly terrible! In its default mode of operation, it didn’t help at all with adjusting settings on the display, and it created a ridiculous profile that attempted to do all white balance and output brightness adjustments on the computer by reducing the output levels of the RGB channels accordingly. This looked awful and created obvious clipping and banding in images. Worse, it somehow installed itself at the GPU level, making it really unclear how to remove or reset this. Eventually, uninstalling their software, fiddling with some things, and rebooting got me back to the much better state in which I started. Fortunately, the vastly better open source DisplayCAL (and the underlying ArgyllCMS software it uses) is available. It’s free, but it worked so well for me that I made a donation to contribute to its ongoing development.

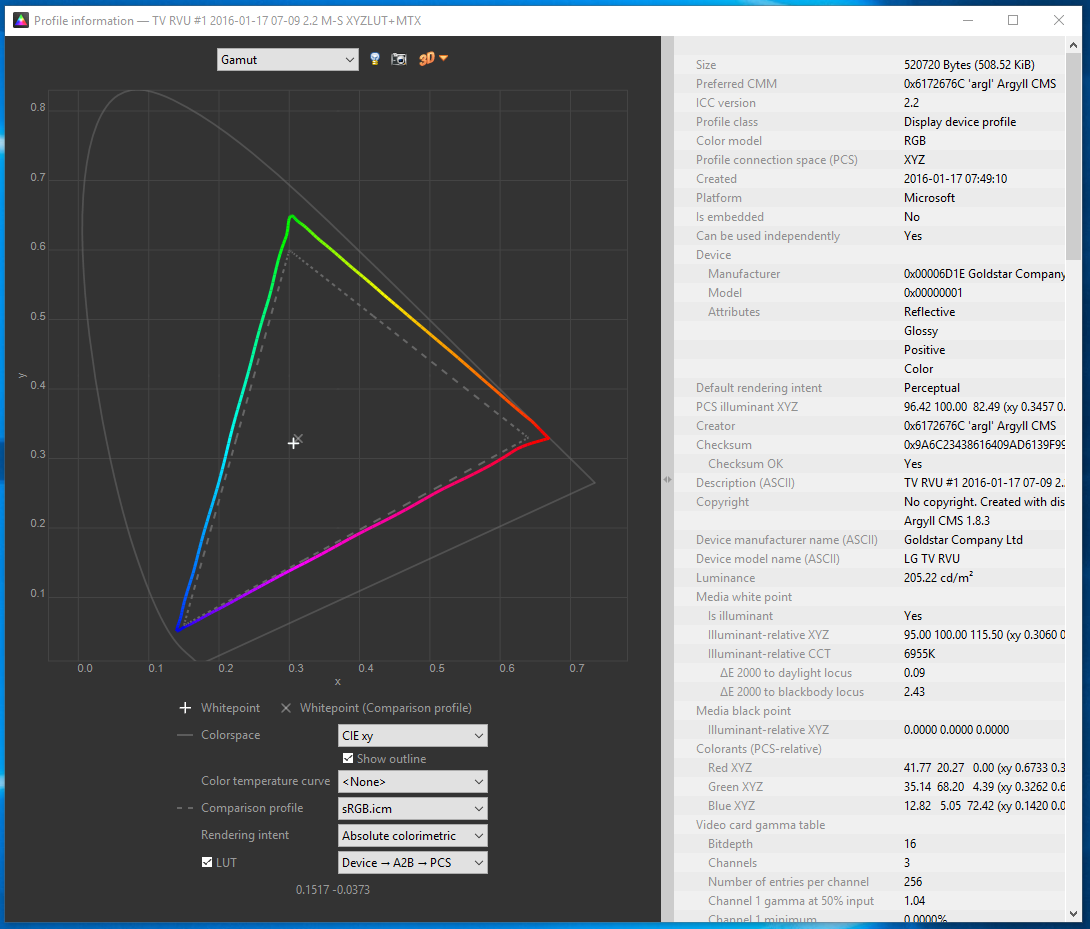

One of the goals of calibration is to achieve a particular white point. This is important for any photo editing, or else once you get things looking right on your screen, the white balance will look off everywhere else. Calibrating to a 6500K white point will usually make sense. The DisplayCAL software was particularly helpful for getting this close using controls on the actual display, so that the connection between your computer and display can still use the full available range. Otherwise, creating a profile is surprisingly automatic, and at the end of the day, I had an accurate (hopefully!) .icc profile for my LG EG9600, and a better understanding of its coverage relative to sRGB:
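Calibration tools typically report the measured white as an xy chromaticity, while targets are usually described as a color temperature; the standard D65 white behind the common 6500K target sits at about (0.3127, 0.3290). McCamy's cubic approximation is a well-known shortcut for converting between the two near the daylight range. This is a sketch for intuition, not a claim about what DisplayCAL does internally.

```python
def mccamy_cct(x: float, y: float) -> float:
    """Approximate correlated color temperature (kelvin) from CIE 1931 xy
    chromaticity using McCamy's cubic formula.

    Only meaningful for whites near the blackbody curve; it is an
    approximation, not an exact inverse.
    """
    n = (x - 0.3320) / (0.1858 - y)
    return 449.0 * n**3 + 3525.0 * n**2 + 6823.3 * n + 5520.33


if __name__ == "__main__":
    print(round(mccamy_cct(0.3127, 0.3290)))  # D65, close to 6500K
    print(round(mccamy_cct(0.44757, 0.40745)))  # CIE illuminant A, near 2856K
```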

Cameras and File Formats

What’s next after calibrating a wide-gamut display? Do you need a wide gamut camera to go capture this wider range of colors that your monitor can now display? Fortunately, the answer is no – unless you want a camera that can capture infrared images! Cameras don’t have the tough task of creating light at specific wavelengths; they only need to filter light, and that’s a much easier task. Even digital cameras from 10 years ago were capable of capturing color well beyond what current displays are capable of reproducing.

However, this isn’t necessarily the case with the file formats the camera stores. If you shoot JPEGs, then your camera has to convert the very wide range of color that its sensor is capable of to one of the narrower color spaces that is appropriate for a JPEG. The most common of these is – you guessed it! – sRGB. Any colors in the original image outside what sRGB can represent get mapped to the closest matching color (which may not really be very close at all). Most DSLRs offer the option of recording in a wider color space, like AdobeRGB, but this has many issues of its own, as laid out in this fstoppers article on AdobeRGB vs. sRGB. One issue it doesn’t mention is that trying to represent more colors when you’ve only got 8 bits per channel to do so makes AdobeRGB more likely to result in banding (e.g. in the gradient of a blue sky). The biggest headache, though, is that you’ll likely need to convert the image to sRGB anyway before publishing or sharing it.
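The JPEG conversion described above can be sketched in a few steps: transform CIE XYZ into linear sRGB with the standard 3x3 matrix, flag channels that fall outside 0 to 1 (those colors are out of gamut), clip them, then apply the sRGB transfer curve and quantize to 8 bits. Per-channel clipping is the crudest possible gamut mapping and is my simplification; cameras and raw converters use more sophisticated approaches. The function names are hypothetical.

```python
# XYZ (D65 white, Y normalized to 1.0) -> linear sRGB, per IEC 61966-2-1.
XYZ_TO_LINEAR_SRGB = (
    (3.2406, -1.5372, -0.4986),
    (-0.9689, 1.8758, 0.0415),
    (0.0557, -0.2040, 1.0570),
)


def srgb_encode(c: float) -> float:
    """Apply the sRGB transfer function to a linear value in [0, 1]."""
    if c <= 0.0031308:
        return 12.92 * c
    return 1.055 * c ** (1 / 2.4) - 0.055


def xyz_to_srgb8(X: float, Y: float, Z: float, tol: float = 1e-3):
    """Return ((r, g, b), in_gamut) with each channel in 0..255."""
    linear = [row[0] * X + row[1] * Y + row[2] * Z for row in XYZ_TO_LINEAR_SRGB]
    # A negative channel, or one above 1.0, means sRGB cannot represent the color.
    in_gamut = all(-tol <= c <= 1 + tol for c in linear)
    # Naive gamut mapping: clip each channel independently.
    clipped = [min(max(c, 0.0), 1.0) for c in linear]
    rgb8 = tuple(round(255 * srgb_encode(c)) for c in clipped)
    return rgb8, in_gamut


if __name__ == "__main__":
    print(xyz_to_srgb8(0.9505, 1.0, 1.089))  # D65 white
    # A very saturated green (chromaticity near 520nm) at half luminance.
    x, y, lum = 0.0743, 0.8338, 0.5
    print(xyz_to_srgb8(x / y * lum, lum, (1 - x - y) / y * lum))
```

The white comes out as (255, 255, 255) and in gamut; the saturated green has a strongly negative red channel and gets flagged, so whatever 8-bit value it ends up with is only an approximation of what the sensor saw.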

And if you’re going to do that, you might as well use RAW for this purpose. I’ve always thought RAW was the right choice, especially for non-photographers who make mistakes that RAW can help recover from. For color, RAW is vastly superior; it captures everything the sensor is capable of detecting, and doesn’t throw information out to produce a JPEG in a narrower sRGB space. Even if you don’t use this now, 5 or 10 years from now you may see colors you never knew were being captured all along. Today, you’ll still export from RAW into sRGB JPEGs, but if you keep the original RAW images around, then when a wider gamut standard like Rec.2020 finally becomes commonplace even for images, you’ll be one automated re-export away from benefiting.

Color Managed Applications (and Services)

Does everything suddenly get more accurate as soon as you install that shiny new .icc profile? Sadly, no. Only applications that are color-aware will even pay attention to the fact that a profile is installed at all. The vast majority of applications ignore it, and continue to crank out sRGB images that will look oversaturated when displayed using the full gamut that a wide gamut display offers. Things are apparently much better on Mac in this regard, but on Windows, just three applications I use have any form of color management:

- Adobe Lightroom. Since this is where I do all my photo editing, it’s really the only application that matters to me. I really do rest easier knowing that I’m not spending hours and hours editing photos solely to make them look better on my monitor. I know given the current state of the world that almost any photo I share will be viewed on an uncalibrated monitor, but since many of the photos I take now are for looking back later, I’m optimistic that this will make a difference on the highly color accurate display technology of the future :).

- Adobe Photoshop. I don’t use this, but it is certainly color aware!

- Web Browsers (partially). Outside of photos and games, virtually 100% of my time is spent in the browser, so in a way this is the only application that matters. Today, Chrome color manages JPEG images that have a color profile attached (but doesn’t manage untagged images, which is most of them). We’re hard at work making this better, so I’m optimistic that soon everything will be accurate! Firefox similarly has partial color management. Safari is fully color managed. Surprisingly, both IE and Edge seem to entirely ignore the output display profile!

A feature of Lightroom I’d never used before is soft-proofing, which is designed to let you preview what output will look like. In soft-proofing mode, you can specify a target color space and enable an out-of-gamut warning; this highlights, in bright red (or another color of your choosing), any parts of the image with colors that can’t be accurately represented. For example, consider this simple shot of Teh O Limau Ais (iced lemon tea at a Malaysian food stall):

The image you’re seeing was already converted to sRGB, as nothing else really works today. But how much of this image was out of gamut? As it turns out, quite a lot:

So why not export JPEGs in a wider color space that preserves captured color, at least to the extent that I see it when processing photos on my display? For starters, an 8-bit JPEG is going to produce more banding when used with a wide color gamut; a 10-bit+ image format is really needed to make this practical. But even if that weren’t an issue, essentially every service – including SmugMug, which I use for hosting photos you see here – converts anything you upload to sRGB. In part, this is because the vast majority of web users have displays that are sRGB at best, and more typically cover only about 72% of sRGB. It’s also because web browsers historically didn’t properly handle images in non-sRGB color spaces and would display them washed out. While that’s largely no longer true – Edge, Chrome, Firefox, and Safari all handle ICC-tagged JPEGs – people do sometimes stay on old browser versions for a while.
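To see why bit depth matters for banding, consider a smooth gradient, such as a patch of sky, that spans a tenth of a channel's range (an arbitrary illustrative figure). Quantizing it at 8 and 10 bits and counting the distinct codes shows how many visible steps each format can offer. The function name is hypothetical.

```python
def distinct_levels(start: float, end: float, bits: int, samples: int = 10_000) -> int:
    """Quantize a smooth ramp from `start` to `end` (fractions of full scale)
    at the given bit depth and count how many distinct code values it uses.
    Fewer codes across the same ramp means larger, more visible steps."""
    max_code = (1 << bits) - 1
    codes = {
        round((start + (end - start) * i / (samples - 1)) * max_code)
        for i in range(samples)
    }
    return len(codes)


if __name__ == "__main__":
    for bits in (8, 10):
        print(f"{bits}-bit: {distinct_levels(0.5, 0.6, bits)} steps")
```

The 10-bit version gets roughly four times as many steps. In a wider color space, the same physical gradient occupies a smaller slice of each channel's range, so an 8-bit encoding has even fewer steps to work with, which is where the extra banding comes from.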

Summary

So that was long and complex, but I think I’d summarize as follows:

- If you don’t edit photos or do color work, when you’re getting your next monitor, get something that’s 100% sRGB (or use your monitor in sRGB mode), but not wider. Unless you like unrealistically saturated colors, which you may!

- If you edit photos or really want accurate colors, consider a wide gamut display. But be prepared to invest in calibration, figure out which of your apps are color aware, make sure you’re shooting RAW, and have patience until file formats and the web catch up with you. If you’re shooting memories for the long term, it will pay off at least a little!

-

A New Monitor

I’ve long had a thing for lots of usable display space. For about eight years, I used two (and later three) 19″ CRT displays in a multi-monitor setup; this was heavy enough that my desk would curve under the weight. Then, in 2007, a 30″ display I’d been eyeing – the Dell 3007WFP – went on sale, and allowed me to replace my aging CRTs with one large LCD panel. I immediately disavowed multi-monitor configurations, significantly preferring a single large display. That monitor served me well for the last 8+ years, but in December I finally swapped it out for something bigger – the 55″ LG EG9600:

Why the EG9600?

First things first: the EG9600 is not a monitor – it’s a 4K OLED TV that’s designed for your living room, not for your desk. OLED’s claim to fame is its image quality, in particular the black levels it is capable of producing. I’d been looking for a monitor for some time, but the things I wanted – IPS panel, 30″+ screen, 4K resolution, accurate colors with a wider gamut – never seemed to show up in a well-received product. It was also becoming clear that computer monitors were turning into a niche item that fewer companies were putting much effort into. I began to entertain the possibility of using a TV in this fashion, and after some research, decided on the EF9600 (flat) or EG9600 (curved). Several factors drove this decision:

- 4K was a fairly obvious requirement (since my prior display was already 2560 x 1600). CE devices sporting the 4K label are typically 3840 x 2160 as opposed to “true” 4K, but close enough.

- The ability to run at 60Hz with no chroma subsampling (aka 4:4:4) was an absolute requirement; many TVs don’t do this because it’s not necessary for video, but subsampled chroma looks horrible on text. I was able to confirm that others were successfully doing 4K 60Hz 4:4:4 on the EG9600 over HDMI 2.0.

- I wanted a wider color gamut than the 100% sRGB coverage of typical LCDs; the LG OLEDs aren’t class leading but provide a decently wider gamut than sRGB.

- Great overall image and video quality were important, and as of mid-2015, many reviewers seemed to agree that the LG OLED models were class leading in this respect.
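The 4:4:4 requirement is really a bandwidth question. As a rough sketch: the standard CTA-861 timing for 3840x2160 at 60Hz is 4400x2250 total pixels including blanking, giving a 594 MHz pixel clock. For 8-bit RGB that maps directly to the TMDS character rate; deeper color scales it up, and 4:2:0 halves it. HDMI 1.4 tops out at 340 MHz and HDMI 2.0 at 600 MHz. The function names are hypothetical, and the model ignores 4:2:2 and HDMI 2.1 signaling.

```python
# Maximum TMDS character rates in MHz.
HDMI_MAX_TMDS_MHZ = {"HDMI 1.4": 340.0, "HDMI 2.0": 600.0}


def tmds_rate_mhz(h_total: int, v_total: int, refresh_hz: float,
                  bits_per_component: int = 8, chroma: str = "4:4:4") -> float:
    """Approximate TMDS character rate for a video mode.

    h_total/v_total include blanking. Only 4:4:4 (RGB) and 4:2:0 are modeled.
    """
    pixel_clock_mhz = h_total * v_total * refresh_hz / 1e6
    if chroma == "4:2:0":
        pixel_clock_mhz /= 2  # half as much data per pixel
    elif chroma != "4:4:4":
        raise ValueError("only 4:4:4 and 4:2:0 are modeled here")
    # Deep color (10/12 bit) raises the rate proportionally.
    return pixel_clock_mhz * bits_per_component / 8


def links_that_fit(rate_mhz: float) -> list:
    """Names of HDMI versions whose TMDS limit can carry this rate."""
    return [name for name, limit in HDMI_MAX_TMDS_MHZ.items() if rate_mhz <= limit]


if __name__ == "__main__":
    modes = [
        ("4K 60Hz 4:4:4 8-bit", tmds_rate_mhz(4400, 2250, 60)),
        ("4K 30Hz 4:4:4 8-bit", tmds_rate_mhz(4400, 2250, 30)),
        ("4K 60Hz 4:2:0 8-bit", tmds_rate_mhz(4400, 2250, 60, chroma="4:2:0")),
        ("4K 60Hz 4:4:4 10-bit", tmds_rate_mhz(4400, 2250, 60, bits_per_component=10)),
    ]
    for label, rate in modes:
        print(f"{label}: {rate:.2f} MHz -> {links_that_fit(rate) or 'none'}")
```

This matches the requirement above: 4K at 60Hz with full 4:4:4 color needs HDMI 2.0, while HDMI 1.4 bandwidth only reaches 4K at 30Hz. 4:2:0 at 4K 60Hz fits within HDMI 1.4-class bandwidth, but support for it arrived with HDMI 2.0.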

Ideally, I’d have liked a 40″ display – large enough to avoid text scaling, but small enough to be practical on my desk and to provide the increased pixel density I’ve gotten used to on the Chromebook Pixel or Retina MacBook Pro. Unfortunately, essentially all higher end TVs sold in North America seem to start at 50″; LG’s OLED lineup was no exception, with 55″ being the smallest display offered. This meant lower pixel density than my existing monitor (80ppi vs 100ppi previously). I figured I could sit a little further back, and decided to take the plunge.
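The pixel density figures above come from dividing the diagonal resolution in pixels by the diagonal size in inches. A quick sketch, using nominal screen sizes (a TV's actual viewable diagonal is often slightly smaller):

```python
import math


def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch along the diagonal of a display."""
    return math.hypot(width_px, height_px) / diagonal_in


if __name__ == "__main__":
    displays = [
        ('30" Dell 3007WFP (2560x1600)', 2560, 1600, 30),
        ('55" LG EG9600 (3840x2160)', 3840, 2160, 55),
        ('Hypothetical 40" 4K display', 3840, 2160, 40),
    ]
    for name, w, h, diag in displays:
        print(f"{name}: {ppi(w, h, diag):.0f} ppi")
```

That works out to about 101, 80, and 110 ppi respectively, so a 40″ 4K panel would indeed have been denser than the old 30″ Dell.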

I still had to decide on the flat EF9600 vs the curved EG9600; both were priced identically, with no significant feature differences. I’ve made fun of curved screens in the past, joking that they seemed completely useless unless you want to sit right in the middle of the arc – which you’d have to sit two feet away from the screen to do. I never thought that was something I’d actually do, but here I am! Frankly, though, even at this distance I doubt there’s much difference.

Setup and Settings

Currently, 4K 60Hz 4:4:4 on a PC isn’t something you can realistically expect to work out of the box unless you’ve checked a few things. In fact, I’ve still been unable to make this work at all with either a 2014 Retina MacBook Pro or a Dell XPS 13. Fortunately, neither of those devices is my primary PC! Still, even my desktop required four manual steps to get things to work acceptably:

- HDMI 2.0. Computer displays almost always use DisplayPort 1.2 to support 4K/60. TVs typically don’t support DisplayPort at all; the EG9600 is no exception to this rule, with just three HDMI ports. Many laptops have HDMI ports, but even new ones are typically just HDMI 1.4 and thus limited to 30Hz. Even discrete graphics cards that aren’t fairly new will typically lack HDMI 2.0. In my case, I replaced an Nvidia GTX 660 with a newer GTX 980 Ti; this provided both HDMI 2.0 and the increased GPU horsepower needed for gaming.

- TV Input Settings. TVs don’t default to the optimal settings for a computer display. Fortunately, a forum post by someone who blazed this trail before I did provided the essential details: in the TV settings menu, enable HDMI Deep Color, change the name of the input to PC, change the icon to a computer, set aspect ratio to “Just Scan”, and use HDMI 1 or 2 (but not HDMI 3 which seems to have a lower bandwidth limit). I also found it helpful to set sharpening to zero, and to disable all processing/dynamic features that were offered (I’ll say more about color later).

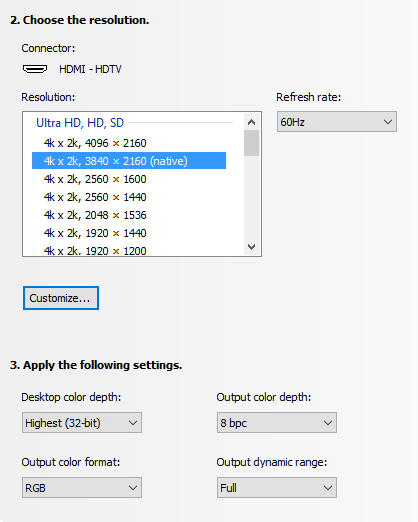

- PC Output Settings. This depends on your graphics card; below is an image from Nvidia’s control panel tool. Two settings are very important – and were incorrect by default! The first is the output color format; RGB is optimal, and settings like YUV420 which are common for TVs will make text look terrible. The second is the output dynamic range, which defaulted to Limited; this is also typical for video. If you’re lucky, you’ll notice right away because blacks are dark grey, and whites are light grey. If you’re unlucky, your TV will detect this, stretch the limited signal from your PC back to full range, and you’ll get reduced image quality forever without noticing this. Nvidia resets this when you update the driver, so check once in a while!

- Prevent Position and Size Changes. This was the toughest issue to solve. Every time I powered the screen on, all my windows were repositioned to the top left of the screen, and shrunk to a fraction of their size, as if the display had cycled through a lower resolution as part of its startup sequence. This was a problem for a long time on Windows 7/8, so there were lots of suggestions including registry edits, but none of them seemed to work. What I discovered by using Chrome Remote Desktop to connect to my machine while the display was off was that the resolution was correct – but the scale factor for text was set to 300% (vs. 100% normally when the display is on). Changing this was ineffective; it’d still reset every time. Going to Control Panel > Appearance and Personalization > Display, clicking on the not-recommended “set a custom scaling level”, and choosing 100% finally fixed this! I also did this (2nd post on snapping), in case the combination is what really corrected things.
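The limited vs. full range problem is easy to see numerically. In 8-bit limited (video) range, black is code 16 and white is 235, while full (PC) range uses 0 to 255. A minimal sketch of the two conversions, with hypothetical function names:

```python
def full_to_limited(v: int) -> int:
    """Squeeze an 8-bit full-range level (0-255) into limited range (16-235)."""
    return round(16 + v * 219 / 255)


def limited_to_full(v: int) -> int:
    """Stretch a limited-range level back out to 0-255, clipping the excess."""
    return min(255, max(0, round((v - 16) * 255 / 219)))


if __name__ == "__main__":
    # A limited signal shown as if it were full range: black and white wash out.
    print("black sent as", full_to_limited(0), "white sent as", full_to_limited(255))
    # Squeezing and re-stretching cannot recover all 256 original levels.
    survivors = {limited_to_full(full_to_limited(v)) for v in range(256)}
    print(len(survivors), "of 256 levels survive the round trip")
```

Only 220 distinct levels make it through, so even when the TV stretches the signal back to full range, smooth gradients lose steps: the silent quality loss described above.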

Remaining Issues

Although core functionality and some of the bigger initial issues were resolved with the setup and settings above, there are a number of lingering annoyances for which I’ve found no solution:

- There is no traditional sleep state. My computer turns off the display after a few minutes of inactivity; the EG9600 detects this as “no input” and shows a corresponding “no input” message for another ~5 minutes before powering off. That’s no big deal; the problem is that it can’t power back on automatically (e.g. when you move your mouse). So every time I sit down, I have to manually power the display on. I’d complain more, but I remember how long it took to power on your PC in the Windows 3.1 days :).

- The EG9600 has a non-defeatable (as far as I know) dimming function when it detects that on-screen content is not changing – presumably to prevent persistence (burn-in). The issue is that it’s looking for big changes – so when you’re typing an E-mail or reading a static web page, it gets gradually dimmer until a change is detected at which point you’re hit with full brightness. Bringing up the Alt-Tab switcher occasionally defeats this, but it is pretty annoying. Fortunately, it doesn’t affect photos, videos, or games – just text work.

- There is indeed a little display persistence with static content; you’ll see a lingering version of content you leave up for a long time when switching to a grey screen. In practice, this has been a non-issue for me, and things don’t persist for long once the screen changes.

- Although viewing angles are generally great with OLED, there is a small color shift as you move from side to side; images look warmer when viewing head on, and cooler from the side. This is also more or less a non-issue unless you’re editing photos with someone else or like to swing your head from side to side as you work.

The dimming issue is by far the largest of these; the power issue is a minor inconvenience, and the other issues don’t bother me at all in practice.

Was it worth it?

Pros:

- 4K delivers a nice bump in clarity. Sure, 4K video looks great – and at 2-3 feet away, the added detail is easily visible. But it’s photos where this really shines; for years, every camera and even many smartphones were capturing images at higher-than-4K resolutions – now it’s possible to appreciate more of that detail.

- Colors look great, with the EG9600 supporting a gamut that’s about 88% of DCI-P3 (and a decent bit beyond still-typical sRGB displays).

- Black levels truly are amazing. I can’t see the transition between the screen and the bezel with dark content. I never appreciated the impact this has on overall image quality until I got used to the EG9600, and it’d be really tough to go back.

- Size. 55″ of screen real estate does reduce pixel density, but in my opinion is a net plus, as it lets you run without scaling anything up, providing a massive amount of working area in the process. Lightroom’s entire toolbar pane will often be visible onscreen without scrolling. I can work on things side-by-side and still have a video visible. You can lean in to take a look at something up close, or lean back to appreciate the entire image.

At night without light, blacks are sufficiently black that on some images you can’t see the edges of the screen. This is with no processing of the image from my camera:

Cons:

- Without a modern GPU with HDMI 2.0 support, you’re likely to have a bad time, and be stuck at either 30Hz or with 4:2:0 chroma subsampling.

- A number of TV vs. monitor design differences persist, with the dimming issue being the most annoying in actual use. If you mainly do text work, this could be a deal breaker.

- Size. I mentioned this as a plus above, but any 55″ TV is going to dominate your desk, and for tasks like E-mail or documents, you’ll likely be using a tiny fraction of the available space.

- Cost. Despite significant price drops late last year, OLED is still very expensive; only rationalizing that I spent about 10,000 hours in front of my last display allowed me to remotely justify this.

For me, the LG EG9600 is definitely an improvement over the 30″ Dell that served me well for over 8 years; I love it and I plan to stick with it for quite some time. However, unless you spend a good portion of your time on photos, videos, or games, there are probably better options for most people. If you do go this route, make sure you’ve got HDMI 2.0, a capable GPU, and a willingness to accept the limits of using a TV!

-

More Things I Use

Besides the Sigma 50mm f/1.4 Art I mentioned a few posts ago, and monitor/home theater changes I’ll mention separately in the future, there are a number of other things I started using over the past year or so. Here are a few notes on those things!

HIFIMAN HE400S

This purchase was born entirely from curiosity, as there’s truly nothing wrong with the Sennheiser HD-595 open headphones that I’ve historically used. Indeed, this was a particularly wasteful purchase, because I only occasionally use headphones now that my computer is tucked away in the basement, inaudibly far from where anyone might be sleeping. The big draw for me with these particular headphones is that they’re planar magnetic, like the Magnepan IIIs I’ve used for a couple of decades now and have been a fan of for music listening. Many past planar magnetic headphones were uber-expensive ($1000+), so when these came in at the “low” price of $299, I indulged and picked up a pair. I don’t try enough headphones to accurately review how these fare against anything else on the market, but I do prefer them over my 8-year-old HD-595s, and to my ears they sound great and are the best I’ve heard in this category. I don’t know that I could call a winner between these and my ER-4PT earbuds; the earbuds benefit from blocking out all background noise, but the open circumaural design of the HE400S is much more comfortable to me for extended listening.

SVS SB12-NSD

I’ve used a full-sized subwoofer in my computer audio setup for about 16 years, largely because of a “deal” back in 2000 that left me owning multiple Advent AV-550S subwoofers at a low price. A few years ago, I replaced the one in our home theater setup with an SVS PB12-NSD. While it was a little smaller than the 15″ AV-550S, it was much clearer and represented a significant step up in overall quality. This always tempted me to update my PC setup as well, but it seemed a little wasteful to get a nicer subwoofer just for a PC. However, in late 2015, the SB12-NSD (a sealed version of the PB12-NSD) went on final closeout, having been replaced some time ago by newer models. I was so happy with the PB12-NSD upgrade that I couldn’t pass up the chance!

First off, I was quite wrong in thinking this wouldn’t make a big difference in my PC setup. In retrospect it’s obvious: because I use smaller bookshelf speakers in my PC setup than in the HT setup, the sub is actually more important there, since it handles a greater portion of the overall audio spectrum. In any case, the upgrade was very worthwhile; unexpectedly, I now listen to music at my desk more often than before, and the experience is much closer to that of the bigger speakers elsewhere in my home.

I also learned something I wish I’d known earlier about setting phase on a subwoofer, which historically I’d always done via trial & error with no confidence I was making any real difference. A forum post somewhere recommended simply playing an 80Hz test tone (or whatever crossover frequency you wish), using an SPL meter (or your ears) in your main listening position, and tuning phase to maximize amplitude. Subjectively, it feels like that approach worked really well, even with the $17 SPL meter I use.
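Why does maximizing the level work? At the crossover frequency both the sub and the main speakers reproduce the tone, and two equal-level sine waves offset by a phase φ add to an amplitude of 2|cos(φ/2)|, so the loudest reading is the in-phase setting. Here’s a minimal Python sketch of both halves of the trick: generating an 80Hz test tone as a WAV file, and showing how the summed level falls off with phase error. It assumes equal levels from the sub and mains at the crossover, which a real room won’t give you exactly; the function names are my own.

```python
import io
import math
import struct
import wave


def make_test_tone_wav(freq_hz=80.0, seconds=10.0, sample_rate=48000, level=0.5):
    """Return the bytes of a mono, 16-bit PCM WAV file holding a sine tone."""
    peak = int(level * 32767)  # stay below 16-bit full scale to avoid clipping
    frames = bytearray()
    for n in range(int(seconds * sample_rate)):
        sample = int(peak * math.sin(2 * math.pi * freq_hz * n / sample_rate))
        frames += struct.pack("<h", sample)  # little-endian signed 16-bit
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(sample_rate)
        wav.writeframes(bytes(frames))
    return buf.getvalue()


def summed_amplitude(phase_offset_deg):
    """Amplitude of two unit sine waves added with the given phase offset.

    |1 + e^(j*phi)| = 2 * |cos(phi / 2)|: 2.0 when in phase, 0.0 at 180 degrees.
    """
    return abs(2 * math.cos(math.radians(phase_offset_deg) / 2))


if __name__ == "__main__":
    # Sweep the offset the way you'd sweep the sub's phase control.
    for offset in range(0, 181, 45):
        print(f"{offset:3d} degrees -> relative level {summed_amplitude(offset):.2f}")
    # To play the tone: open("tone_80hz.wav", "wb").write(make_test_tone_wav())
```

In a real room the peak won’t land exactly at 0° on the dial, because the sub’s distance and the crossover filters add their own delay; that’s exactly why measuring beats guessing.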

Canon Pixma Pro-100

I’ve been on the fence about getting a photo printer. It’s generally not necessary, because Costco and others will happily print whatever you care to send them on a high quality printer, and they’re just a few minutes from my home. Two things finally got me to pull the trigger. The first was a rebate from Canon that effectively reduced the price of this printer to $200 (half its normal price). The second was looking into color gamut (the range of colors that can be represented), and realizing that the gamut of my camera, this printer, and my monitor is wider than that of the (sRGB) JPEGs that any consumer photo service is willing to accept. In other words, exporting my photos to JPEG and sending them to Costco meant losing certain colors that their printers (and now mine) are actually fully capable of reproducing.
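To make the gamut point concrete, here’s a small Python sketch that takes an Adobe RGB (1998) color, converts it to linear sRGB through CIE XYZ, and checks whether it lands inside sRGB’s 0 to 1 range. Adobe RGB is my stand-in for a wider-gamut working space; the post doesn’t say which color space the camera or printer actually uses. The matrices are the commonly published D65 conversion values, rounded to seven digits.

```python
# Commonly published D65 matrices (rounded): Adobe RGB (1998) -> XYZ, XYZ -> linear sRGB.
ADOBE_RGB_TO_XYZ = [
    [0.5767309, 0.1855540, 0.1881852],
    [0.2973769, 0.6273491, 0.0752741],
    [0.0270343, 0.0706872, 0.9911085],
]
XYZ_TO_LINEAR_SRGB = [
    [3.2404542, -1.5371385, -0.4985314],
    [-0.9692660, 1.8760108, 0.0415560],
    [0.0556434, -0.2040259, 1.0572252],
]
ADOBE_RGB_GAMMA = 563 / 256  # about 2.2


def mat_vec(matrix, vector):
    """Multiply a 3x3 matrix by a 3-component vector."""
    return [sum(row[j] * vector[j] for j in range(3)) for row in matrix]


def adobe_rgb_to_linear_srgb(rgb):
    """Convert encoded Adobe RGB values in [0, 1] to linear sRGB components."""
    linear = [channel ** ADOBE_RGB_GAMMA for channel in rgb]
    return mat_vec(XYZ_TO_LINEAR_SRGB, mat_vec(ADOBE_RGB_TO_XYZ, linear))


def in_srgb_gamut(adobe_rgb, tolerance=1e-3):
    """True if every linear sRGB component falls within [0, 1], allowing for rounding."""
    return all(-tolerance <= c <= 1 + tolerance for c in adobe_rgb_to_linear_srgb(adobe_rgb))


if __name__ == "__main__":
    for name, color in [("white", (1, 1, 1)), ("mid gray", (0.5, 0.5, 0.5)), ("pure green", (0, 1, 0))]:
        print(name, in_srgb_gamut(color))
```

Adobe RGB shares its red and blue primaries with sRGB, so the out-of-gamut colors show up mostly among saturated greens and cyans.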

Is this difference visible? I honestly haven’t done enough testing to know yet, but at 50% off, I was willing to experiment. It’s half worth it just to install all the ink cartridges, each of which lights up its own impressive glowing LED once properly installed:

P.S. The LEDs in that picture are a good example of a color that’s out of gamut, which is partly why the image above doesn’t look as nice as the real thing :).

Tamrac JETTY 7

I already have a fairly crazy number of camera bags, each of which seems to serve a different purpose. The Think Tank Digital Holster 40 is great when I’m bringing just one lens; the Lowepro Rezo 180AW worked well with a few smaller lenses (not the f/2.8 zooms) plus a flash but was a bit bulkier to carry around; the Tamrac Rally 7 is still the travel bag I use all the time when I need to bring a 15″ laptop, my D800, and several large lenses. Amazingly, this still left me wanting a bag that was as unobtrusive as the Think Tank, but big enough to carry a pair of smaller lenses. The Tamrac JETTY 7 turned out to fit this bill quite well – it comfortably holds the D800, a couple of full frame primes (one attached to the body), a small flash (in the front compartment) – and even a tablet, though I never bring one:

I tried this bag mainly because it was on sale at Costco, but I now use it regularly – though amazingly, I do so alongside all three of the other bags mentioned, which still see active use!

Motorola Nexus 6

Valerie completed the destruction of her Nexus 4 about a year ago, so I needed to either get her a new phone or give her my Nexus 5 and switch to something else. I decided to try the Moto Nexus 6 to see how I felt about the oversized phone thing. I find it… a little oversized. The increased screen real estate is nice, but no amount of getting used to the phone will increase the size of my hand. In any case, there’s plenty of material out there on this and every other phone, and 12 months later it’s old news, so enough said!

-

Still Ugly, But Now Cheaper

I’d previously described my system for playing music via Chromecast on legacy equipment that includes amps and speakers from the 80s and 90s, which are highly inefficient with power. A custom ugly box to automatically power my amp on and off was the final missing component of that system, and it has since made it much easier to play music whenever we feel like it.

The main issue with that box, besides its appearance, was the cost of making it – about $125. This was driven largely by the use of the $96 RDL ST-ACR2, which still required a separate external relay, and which mandated the use of a 24v power supply. I’d commented that it must be possible to build a vastly cheaper yet otherwise equivalent device. Largely out of curiosity about whether I could still do anything beyond plugging in a power cord, I decided to build a much more cost-efficient version!

This time, the starting point was a design for an audio-controlled relay that I found via Google search. It was a relatively straightforward design that seemed quite appropriate; the author was kind enough to describe its operation in detail and to suggest possible modifications. The image and circuit are owned by the author, and the image links to the original:

After a rather sad amount of time just refreshing my memory to the point of understanding the operation of the circuit, I went ahead and purchased the necessary parts. I made two modifications to the circuit: the first was replacing R6 with a 20k variable resistor to adjust sensitivity, and the second was reducing R11 from 10M to 1M to drop the power-off time from 20 minutes to 2 minutes. Ignoring that several parts only come in larger quantities, and the fact that I decided to replace the $10 Radio Shack soldering iron I’d had since childhood, this circuit was indeed cheaper by an order of magnitude – and I was able to purchase all the necessary parts via Amazon:

- 11 resistors, various values: ~$0.22 (I bought this kit of assorted values)

- 1 20k variable resistor: ~$0.08 (I bought this kit of assorted values)

- 5 capacitors, various values: ~$0.20 (I bought this kit of assorted values)

- 1 LM358N op-amp: $0.35

- 1 2N7000 MOSFET transistor: $0.13

- 2 1N4004 diodes: $0.32

- 1 DC 12v relay (rated for 15A @ 120VAC): $1.46

- 1 4cm x 6cm generic PCB: $0.70
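The R11 tweak described above is consistent with the off-delay being set by an RC time constant: the time for a capacitor to discharge through a resistor from a starting voltage down to a trip threshold is t = RC·ln(V_start/V_trip), so the delay scales linearly with R. Here’s a quick Python check, assuming that RC model; the capacitor value and voltages are hypothetical, since the post doesn’t give them, and they cancel out of the ratio anyway.

```python
import math


def rc_discharge_time(r_ohms, c_farads, v_start, v_trip):
    """Seconds for an RC discharge to fall from v_start to v_trip: t = RC * ln(v_start / v_trip)."""
    return r_ohms * c_farads * math.log(v_start / v_trip)


# Hypothetical timing capacitor and voltages (not taken from the original design).
C_TIMING = 100e-6
V_START, V_TRIP = 12.0, 4.0

before = rc_discharge_time(10e6, C_TIMING, V_START, V_TRIP)  # R11 = 10M
after = rc_discharge_time(1e6, C_TIMING, V_START, V_TRIP)    # R11 = 1M

ratio = before / after
scaled_minutes = 20 * after / before  # apply the same ratio to the original 20 minutes
print(f"delay ratio: {ratio:.1f}x, new delay: {scaled_minutes:.1f} minutes")
```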

Total cost? $3.46. Of course, the real cost of building just a single item was considerably higher considering that some components came in quantities of 5, 25, or even 100 – but now I’ve got a nice supply of electronic components in case I build something similar in the future. Once everything arrived, it was time to use what I’d learned in high school electronics for the first time in more than two decades. Boy, I’m getting old! The end result was much uglier than the RDL ST-ACR2, but still vastly cheaper:

It’s especially ugly because I should probably have used a proto-board (in which holes are connected vertically and split in the middle, vs. being an unconnected grid), which resulted in the back of the board looking like this:

Now, if I’d assembled the circuit perfectly the first time, I’d have been OK with basically no electronics knowledge – the project would just have required a little soldering and following instructions! However, I did make at least one mistake (miswiring one of the transistor’s leads), which required a few measurements to track down. I’d also naively used the only 100nF capacitor in my kit, even though it was an electrolytic capacitor (which is polarized and not suited to AC signals) rather than a ceramic one – so I replaced it with a pair of 47nF ceramic capacitors in parallel, which eliminated a stray 0.5v on the audio input terminal! Finally, an oscillation issue caused havoc when I plugged the audio input into my Nexus 5; the screen went crazy and started registering random touch events! This was solved by connecting the negative DC output terminal to ground. All of this made me very grateful for the strong electronics program my high school had, and in particular to the teacher who made it much better than the standards called for – thanks Mr. Straumers!

Sadly, after testing the circuit above, and starting to assemble things into the project box I chose, I discovered another issue; the nice $7.64 12v power supply I’d ordered was too big to fit inside the project box. I didn’t want to switch to a bigger box, especially since it takes some effort with my very poor mechanical skills to make remotely reasonable holes in these hard plastic boxes (I’d already cut the box I had). Finding no smaller alternatives, I finally ordered a $6.25 12v AC adapter which I cut apart on arrival, using just the circuit inside the plastic housing to supply the power needed by the circuit (power PCB is on the left):

The other components you can see above that round out the box are:

- IEC 60320 power connector – $2.46. This box has a real input power connector; the prior one ran a cut-off power cable directly into the box!

- Hammond 1591DSBK project box – $7.95. At 5″ x 3″ x 2″, this box is tiny compared to the one I used previously!

- AC outlet. I used a standard AC receptacle I had lying around from some earlier house work; these can cost as little as $0.50, though the flat design I used was more expensive, I think.

- Female RCA jack. I’ve also had a bag of these for a few decades, but I imagine they’re quite inexpensive.
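For anyone double-checking the totals, here’s the arithmetic as a small Python tally. It uses the prices listed in this post, counts the outlet and RCA jack (already on hand) as free, and leaves out the $7.64 supply that didn’t fit in the box. Prices are kept in cents to avoid floating-point rounding, and the first box is taken at its approximate $125 cost.

```python
# Prices in cents, as listed in the post.
circuit_parts = {
    "11 resistors": 22,
    "20k variable resistor": 8,
    "5 capacitors": 20,
    "LM358N op-amp": 35,
    "2N7000 MOSFET": 13,
    "2 x 1N4004 diodes": 32,
    "12v relay": 146,
    "4cm x 6cm PCB": 70,
}
enclosure_parts = {
    "12v AC adapter (internal PCB only)": 625,
    "IEC 60320 power connector": 246,
    "Hammond 1591DSBK project box": 795,
    "AC outlet (on hand)": 0,
    "Female RCA jack (on hand)": 0,
}
FIRST_VERSION_CENTS = 12500  # "about $125"

circuit_total = sum(circuit_parts.values())
box_total = circuit_total + sum(enclosure_parts.values())
savings = FIRST_VERSION_CENTS - box_total

for label, cents in [("circuit", circuit_total), ("complete box", box_total), ("savings", savings)]:
    print(f"{label}: ${cents / 100:.2f}")
```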

So in total, it cost $20.12 for this second version, over $100 less than the first attempt – though it was quite a bit scarier relying on my own circuit considering that it’s connected to and controls full 120v AC mains power! A couple more pictures of the final result:

The second image shows the box hooked up to a Chromecast, HDMI audio extractor, and the Emotiva Control Freak used instead of a traditional pre-amp. The speakers and power amp are omitted, as I tested with a lamp; I’ll hook it up to an amp and speakers when other family members aren’t fast asleep :).

-

Audio Interoperability

It’s kind of nice that unlike video, audio equipment is largely interoperable even when individual components are from different decades. My main audio setup was a good example of this – I’m using speakers from the 80s (Magnepan MG-IIIs), a power amplifier from the 90s (Musical Design D-140i), a pre-amp (Emotiva USP-1) and one source (Logitech Squeezebox) from the 2000s, and a second source (Chromecast) from last year. By comparison, video went from RF/coax to composite to S-video to component to HDMI in that timeframe, with HDMI itself going through 1.0, 1.1, 1.2, 1.3, 1.3a, 1.3b, 1.3c, 1.4, 1.4a, 1.4b, and now 2.0 revisions – connecting equipment separated by a decade is basically a non-starter.

However, even audio changes with the times, and today I finally made a change that I would have made a year ago if I were less lazy – because getting things to work closer to the way I’d like actually took some custom elements. Here’s what my setup looks like!

Source: Chromecast + ViewHD

Once upon a time, it was necessary to have multiple sources. There were legacy sources – AM/FM tuners, LP players, and cassette tapes. Even today, CD players can remain relevant if you have a large collection of music on CD, plus despite being 25 years old, good CDs sound as good as any digital format. Of course, digital sources now dominate and are vastly more convenient; my Squeezebox was the primary player for my entire collection of ripped CDs.

However, with subscription services like Google Play Music and Spotify, the very idea of music ownership seems to be coming to an end. I subscribe to Play All Access, and since Play allows me to upload my existing collection – which does actually include esoteric game soundtracks that aren’t available directly via Play – I truly no longer need any other sources. The main shortcoming of Play Music was that it was difficult to connect into my audio system.

Fortunately, that changed with Chromecast (though as a reminder, I work on this area, so I’m very biased!). While Chromecast lacks audio outputs, an audio converter like the ViewHD model above ($40) solves this quite easily. If you don’t need SPDIF output or EDID controls, there are devices as low as $20 that seem like they’d work equally well. If I was more worried about the quality of the DAC inside the ViewHD box, I’d use the SPDIF output to connect to a separate DAC – but I’m pretty sure my kids have permanently damaged my hearing anyways.

Output: Magnepan MG-III + Musical Design D-140i

I’m using the same speakers for music that I bought the year I finished school – the Magnepan MG-IIIs. They’re 6 foot tall ribbon speakers, and while they’re not well suited to home theater, they’re still plenty good enough for my ears on music content (though positioning them optimally is hard these days, and the kids like hiding behind them while murdering my wires). I bought these speakers used in the 90s, from a friend’s uncle who purchased them new in the 80s. They’ve aged much better than the original Nintendo Entertainment System or Sinclair ZX Spectrum 48K sitting in boxes nearby from the same era!

The amp, pictured above, is a Musical Design D-140i that I picked up, used, in the late 90s, and have used since. It’s a Class A amp. If you’re not familiar with amp terminology, that might sound like a compliment, but it actually refers to the design of the amp. Perhaps the most pronounced characteristic of Class A amps – now largely obsolete and replaced by newer designs – is their inefficiency. That’s certainly the case here – if the amp is powered up, it sucks a ton of power and gets very warm even if it’s not making a sound.

This fact has been my main dilemma with my current setup. I can’t leave it turned on, and the only switch on the amp is the big mechanical one you see on the face plate. The amp sits nicely inside a cabinet, but I needed to open it up and turn things on every time I played music. It was very easy to forget that it was on after I stopped casting, in which case, a few days later I’d have both a flaming inferno in the cabinet as well as a higher power bill for the month.
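To put a rough number on the forgotten-amp scenario: energy is just power times time. The post doesn’t give the D-140i’s idle draw or my electricity rate, so the 150 W and $0.12/kWh below are hypothetical placeholders; swap in a reading from a plug-in power meter and your own rate.

```python
def idle_energy_cost(idle_watts, hours, price_per_kwh):
    """Return (kWh consumed, cost) for a device idling at idle_watts for `hours`."""
    kwh = idle_watts * hours / 1000
    return kwh, kwh * price_per_kwh


# Hypothetical figures: 150 W idle draw, left on for 3 days, $0.12 per kWh.
kwh, cost = idle_energy_cost(idle_watts=150, hours=3 * 24, price_per_kwh=0.12)
print(f"{kwh:.1f} kWh, about ${cost:.2f}")
```

Nearly all of that idle power ends up as heat, which is where the warm cabinet comes from.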

Integration: Custom Ugly Box

Remember I said above that I was lazy? It’s because the solution to my Class A power amp problem was actually simple – all I needed was an automated way to turn the amp on whenever music was playing. Complex home automation solutions, where I could power outlets on and off via some app, were out of the question, because my family would never use them; I needed a solution that added no complexity beyond pressing the Cast icon in Google Play Music.

Sadly, there were no ready-made solutions to this problem, despite how simple it seems. My power amp wasn’t new enough to have 12v triggers, and 12v triggers would still require me to turn on a preamp. Some newer amps have auto-sensing, but that would mean replacing the D-140i, which still sounded good and worked very well – plus very few amps actually have auto-sensing. There were current-sensing power bars triggered off another device, but Chromecast draws very little power, with little difference between idle and active states.

In the end, I had to build the custom box pictured above, which takes a single audio input, and uses it to switch an AC circuit. It should be possible to make a box like this for $10, but being lazy I spent vastly more than this. If I have time, I will try and make a $10 version someday, but that would require brushing up on basics around filters, op-amps, and timers. So what’s inside the box, and what did it cost?

- RDL ST-ACR2. This is an audio-controlled relay, and the main component in the system. It cost a whopping $96! It basically uses an audio signal to control a modest relay, with adjustable sensitivity and a switching delay of up to 50 seconds. The delay was key – there were cheaper circuits, but you don’t want your amp power cycling between songs!

- TDK-Lambda LS25-24. This is a 24v DC power supply, which cost $17.50, and was necessitated by the fact that the ST-ACR2 requires 24v power. Like the ST-ACR2, the LS25-24 has nice screw-based terminals that save a bit of soldering.

- SSR-25DA. This is a 25A relay, which costs only $5, and has a trigger voltage of 3-24VDC. While the relay in the ST-ACR2 claims to be rated for 10A, power amps have a notoriously high current draw when they’re first powered on – I blew the auto-switching circuit in my TA-E9000ES because I didn’t realize this. So I just use the ST-ACR2 to trigger this higher rated relay – plus if this relay blows, it’s $5 to replace instead of $96. This particular relay also has screw terminals; the first one I got was PCB mount, and worked until too much shuffling of components inside the box caused one pin to break from metal fatigue :(.

- Hammond 1591E. This is the black box you see above, which cost $7. Not much more to say about it, other than that my skills with a Dremel (to cut holes out) are severely lacking!
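The key behavior of the box is simple to state in code: switch on as soon as the audio level crosses a threshold, but only switch off once the input has stayed quiet for the whole hold delay, so short gaps between songs don’t power cycle the amp. This Python sketch models that logic; it’s a generic illustration with hypothetical names, not how the ST-ACR2 is actually implemented internally.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AudioSenseSwitch:
    """Models an audio-sensing power switch with an off-delay (hold time)."""

    threshold: float      # input level treated as "music is playing"
    hold_seconds: float   # silence required before switching off
    on: bool = False
    last_signal_time: Optional[float] = None

    def update(self, now: float, level: float) -> bool:
        """Feed one level reading taken at time `now`; return the relay state."""
        if level >= self.threshold:
            self.last_signal_time = now
            self.on = True
        elif (
            self.on
            and self.last_signal_time is not None
            and now - self.last_signal_time >= self.hold_seconds
        ):
            self.on = False
        return self.on


if __name__ == "__main__":
    # Two "songs" separated by a 3-second gap, then silence, with a 50-second hold.
    switch = AudioSenseSwitch(threshold=0.1, hold_seconds=50)
    for t in range(80):
        level = 0.5 if t <= 5 or 9 <= t <= 12 else 0.0
        state = switch.update(t, level)
        if t in (7, 61, 62):
            print(t, "on" if state else "off")
```

In the real box, the ST-ACR2’s adjustable delay plays the role of `hold_seconds`; the later DIY circuit, with its R11 change, holds for about 2 minutes instead.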

All told, this was a $125 box – more than what four Chromecasts go for on Amazon at the moment. That’s the price of forgetting my electronics! Wiring everything up was surprisingly easy and more or less worked the first time. One mistake I made was not connecting the outer terminal of the audio input to both the negative and ground input pins on the ST-ACR2, which caused a loud hum on the affected input channel, but that was easy to remedy. Now, my amp powers up whenever I cast music, and is switched off as soon as the music is over!

“Preamp”: Emotiva Control Freak

There was one last piece to the puzzle, and that’s the pre-amp. My current setup was based on the Emotiva USP-1, which was a nice and not terribly expensive 2-channel analog pre-amp with great build quality. It’s far more modern and efficient than the D-140i, but I would have had to leave it on all the time, because when you first apply AC power, it goes into standby mode regardless of its previous state. Alternatively, I had an older Hafler analog pre-amp, but it had always been flaky in one channel and I’d been unable to repair it.

However, a preamp has a pretty basic set of functions – input switching, impedance matching, and volume control. When you’ve got just one source, as was the case here, you can scratch input switching. Impedance matching might be important especially with a range of sources, but I was betting that a relatively modern device like the ViewHD, despite being cheap, would have reasonable output impedance. So that just leaves volume control!

While some passive preamps seem to think they can still get away with a premium price of over $1000, Emotiva’s approach was far more sensible – a big, high quality potentiometer for $50, with decent RCA unbalanced audio interconnects. With this between my Chromecast/ViewHD and power amp, I have an easy and inexpensive master volume knob – and can use Chromecast’s digital volume control when I’m feeling lazy.
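A passive volume control is just a voltage divider, which is why the impedance question matters: the amp’s input impedance sits in parallel with the lower part of the pot, and the source’s output impedance adds in series with the upper part. This Python sketch computes the resulting gain; the 10k pot, 47k amp input, and linear taper are hypothetical values, since the post doesn’t give the Control Freak’s or D-140i’s specs (and real volume pots usually have a logarithmic taper).

```python
import math


def parallel(a, b):
    """Resistance of two resistors in parallel."""
    return a * b / (a + b)


def passive_volume_gain_db(pot_ohms, fraction, load_ohms=math.inf, source_ohms=0.0):
    """Gain in dB of a linear-taper pot set to `fraction` (0..1) of full rotation.

    The lower leg (wiper to ground) is shunted by the amp's input impedance
    (load_ohms); the source's output impedance adds to the upper leg.
    """
    lower = pot_ohms * fraction
    upper = pot_ohms - lower + source_ohms
    shunt = lower if math.isinf(load_ohms) else parallel(lower, load_ohms)
    ratio = shunt / (upper + shunt)
    return -math.inf if ratio == 0 else 20 * math.log10(ratio)


if __name__ == "__main__":
    # Same knob position, progressively heavier loading by the amp's input.
    for load in (math.inf, 47_000, 10_000):
        print(load, round(passive_volume_gain_db(10_000, 0.5, load_ohms=load), 2))
```

The heavier the load relative to the pot, the more the knob’s behavior departs from its markings, which is the practical reason for betting on a reasonably low output impedance from the source and a reasonably high input impedance on the amp.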

In a perfect world, volume commands sent to Chromecast would turn this knob, but that’s asking the impossible. In the future, a volume-enabled ViewHD with HDMI CEC based volume control – and the matching feature on Chromecast – would give me what I truly want. But until then, I have the big, smooth volume knob above. I just need to make sure to keep it hidden from the kids – because if it was ever turned to maximum, something would break. My ears, at the very least!

-

3-hit Combo

The last post discussed the details of building a new desktop, a definite anachronism in this time when people get more excited about the computing capabilities of watches. While a traditional desktop continues to serve my primary computing needs better than any of the emerging pieces of technology, I recognize that’s because my needs are unique – not because the world has it wrong!

A case in point was a recent visit by my sister-in-law, Aimee. Her previous phone was dead, her netbook was archaic, and the machine in her family home in Taiwan is memorable to me mainly because it will literally shock you if you touch it. Good thing Taiwan uses 110v power, not 220v like many other Asian countries! Did I build her a custom, relatively high end Windows desktop? No, certainly not. Instead, we sent her back home with a smartphone, a laptop, and a desktop – all for a little over $600:

It’s truly incredible to live in a time when this much capability can be had so inexpensively. It wasn’t all that long ago that a single component of your computer – perhaps the CPU, or the memory, or even the hard disk drive if you got something big – could cost as much as all three of the above combined.

Acer C720P (11.6″, Touch, 2GB) – $280

Chromebooks may not yet run Starcraft or Lightroom, but if you live on the web, they provide pretty much everything you need. I got a couple of the original Samsung Chromebooks back in 2011 (one for Valerie, one for my mom), and while they occasionally want a Microsoft Office feature they got used to over a decade or two of use, both machines are still going strong. In fact, they’re more capable than when I got them due to updates, but perhaps most importantly, I haven’t had to provide a bunch of tech support, and I don’t worry that spyware is actively stealing information from them. Of course, I work on the Chrome team, so I’m incredibly biased here :).

The baseline C720 is only $240, and was actually what we’d planned on, but Aimee wanted white, and the only model that shipped immediately in white was the C720P, which adds touch capability for $40. Ironically, this was a blessing in disguise. Touch on a laptop has never appealed to me; you need to take your fingers off the keyboard, and you get fingerprints all over the screen. What I didn’t count on was that touch appealed to Aimee – a lot. In fact, I don’t even think she noticed the difference between Chrome and Windows as much as she noticed the difference between touch and non-touch.

It’d be nice if the 2GB of memory was user-upgradeable to 4GB, but sadly the components are a little too integrated for that. But for Aimee’s use, I doubt this will make a difference.

Asus Chromebox – $180

My father-in-law still primarily uses desktops, and I’m certainly not going to object to that. If you don’t need portability, I feel like there’s no substitute for a full-sized keyboard, a decent mouse, and a monitor that’s big enough to really show whatever content you’re looking at. And while I spent north of $1200 to replace my desktop computer, astonishingly, if you don’t “need” a 3.5GHz quad core processor, or 32GB of memory, or a 512GB SSD, a mere 15% of that cost buys you a fully capable Chromebox.

I am also fairly certain that the Chromebox will not administer electric shocks when touched. The fact that its case is entirely plastic will likely do a good job of ensuring this.

Unlike the Acer C720P, the RAM in the Asus Chromebox is definitely user upgradeable (though I did not check if this has any warranty implications). The 2GB version shipped with a single stick of RAM and two slots; it was straightforward to add another 2GB for a total of 4GB. The only tricky part is figuring out that what you need is a 2GB PC3-12800 204-pin SODIMM (though it’s not quite as bad as sorting through near-infinite options for desktop memory at different performance levels).
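The PC3 number is less arbitrary than it looks: it’s the module’s peak transfer rate in MB/s, which is the DDR3 data rate (in MT/s) times the 64-bit (8-byte) module bus. So DDR3-1600 memory is sold as PC3-12800. A tiny Python helper illustrating that rule (the function name is my own):

```python
def pc3_rating(data_rate_mt_s, bus_width_bits=64):
    """Peak bandwidth in MB/s: transfers per second times bytes per transfer."""
    return data_rate_mt_s * bus_width_bits // 8


if __name__ == "__main__":
    print(f"DDR3-1600 -> PC3-{pc3_rating(1600)}")
```

Marketing names round this a little: DDR3-1866 actually runs at about 1866.67 MT/s and is sold as PC3-14900.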

Motorola Moto G Play Edition (16GB) – $200

During the last few years, the evolution of smartphones was breathtaking and paralleled the earlier PC years, where each year raised the performance bar in such a significant way that you felt compelled to upgrade every couple of years or so. In no small part, both with smartphones and the PCs of long ago, that’s because the experience was massively stifled by hardware capabilities at the time. Where you used to turn your PC on, and then go get a snack while you waited for it to boot, you used to do the same just trying to load a web page on early smartphones (especially before 3G/LTE became prevalent).

The truth is that in 2014, even mid-range phones are entirely adequate for pretty much anything you want to do (except perhaps for the tiny fraction of smartphone games that will fully utilize a high-end GPU). While Apple, Samsung, and others will undoubtedly continue to push their latest and greatest new models each year, the improvements barely matter anymore. Going from 480 x 800 WVGA screens in 2011 (which were definitely pixelated) to 720p (720 x 1280) “HD” screens in 2012 was breathtaking. By contrast, the transition last year to 1080p “Full HD” flagship phones – giving you the same 1920 x 1080 resolution you’ll probably find on your 50″ HDTV – was mostly unnecessary and not noticeable to many people. The emergence this year of even crazier resolutions, like the 2560 x 1440 resolution on the Galaxy Note 4, is a sign that we’ve clearly gone too far.
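Pixel density makes the diminishing returns easier to see. This Python sketch computes pixels per inch from resolution and screen diagonal; to compare resolutions fairly it assumes the same hypothetical 5″ screen for every phone (real phones varied in size), plus a 50″ TV for the HDTV comparison.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density: diagonal resolution in pixels divided by diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


if __name__ == "__main__":
    phone_diagonal = 5.0  # hypothetical, same for every resolution
    for label, (w, h) in {
        "WVGA (2011)": (800, 480),
        "720p (2012)": (1280, 720),
        "1080p (2013)": (1920, 1080),
        "1440p (2014)": (2560, 1440),
    }.items():
        print(f"{label}: {pixels_per_inch(w, h, phone_diagonal):.0f} ppi")
    print(f'1080p on a 50" TV: {pixels_per_inch(1920, 1080, 50):.0f} ppi')
```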

Fortunately, that made it possible to pick up a really solid mid-range phone, the Moto G, for a buck less than $200 – unlocked, with no contract, and with a pure Android experience. It’s a great phone that should be a revolutionary upgrade over the HTC myTouch Slide “4G” that it replaces. I’m also pretty happy with the picture I managed to take of it:

While the original budget of $620 for a smartphone, laptop, and desktop replacement was bumped up by $40 for touch and $20 for an additional 2GB of RAM, this trio was still comfortable under $700. I’m still amazed by how much of a steal that feels like.

-

The Mobile Revolution

The tech and mainstream press all seem to agree on the trend towards smaller, mobile devices. Six years ago, laptop sales surpassed desktops. Then, smartphones and apps were the new hotness; then came the iPad, which quickly silenced early doubters as to whether there was room for another form factor between phones and laptops.

Even in my own case, I’d replaced my main desktop PC every three years, pretty much like clockwork. The Pentium 3 based system I’d bought around ~2001 was replaced in 2004 with a 2.4GHz Pentium 4, which served me well until I upgraded to a Core 2 Duo E6400 based system in January 2007, which in turn was my main system until it was replaced with a Core i7-860 in January 2010. I’d stopped building computers from scratch starting with that Pentium 3, because it just wasn’t worth it anymore; the parts cost more than a pre-built, warrantied system from Dell – so all four of the machines I listed above were purchased from Dell, and then customized slightly (often with a better GPU).

As of January, though, my trusty desktop was still serving me relatively well – four years after I’d purchased it. In part, that’s because the mobile focus meant that Intel and others have focused much more on power efficiency than on speed, which hasn’t helped desktops much. A second big factor for me was that the only real gaming I do on PC is Starcraft 2, which, having been released in 2010, doesn’t push a system that hard – even at the native resolution of my 30″ monitor (2560 x 1600). But perhaps most importantly, the majority of computing tasks – interacting with web apps, editing documents, reading E-mail, etc – aren’t too taxing, and are increasingly being designed to run smoothly on low end mobile devices.

Naturally, in denial of the mobile trend – or perhaps as part of a technological mid-life crisis – I decided not just to replace my desktop, but to actually pick each component and assemble the system myself. While assembly is still relatively straightforward, the simplicity of picking between 3-5 options for each component at Dell had become more like picking a dessert at the Cheesecake Factory – there are so many options that as you read the menu you swear you’re seeing the same item multiple times, there just to confuse you. Why did I go this route? In part, because a few pieces of my old system (especially the recently replaced GPU) were entirely adequate; but also because I wanted fewer compromises – plus the kids were away in Asia, so I actually had a little more time.

So what did I pick, and why?

Case: Nanoxia Deep Silence 1. I clearly came out of a very different age in picking this “mid-tower”. In my teens, selling 386-era PCs with my friend Herman, “mid tower” actually meant “smaller than the traditional size”, usually giving up a little internal room along the way. Now, like beverage sizes at McDonald’s, “medium” really means pretty large, and “full tower” means gargantuan. I should have looked at actual dimensions ahead of time – this case is much bigger than what I had, and more than I actually need.

At $120, this case isn’t cheap – in fact, it’s the most expensive computer case I’ve purchased. But as the reviews promised, it’s very nicely designed and built, and very quiet, with internal dampening to keep the noise down and a built-in fan controller (so you can adjust fan speed, and thus noise, down to the levels you need). It’s also one of the few options if you want a quality case with a simple aesthetic – it seems like most cases go for over-the-top visual designs with crazy lights, a faceplate that looks like a robot/demon/dragon/etc, and so forth. Some of its noise performance is still defeated by my slightly noisy GPU (which, of course, has fan ports that exhaust directly out of the case), but all in all I do like it.

Power Supply: eVGA 500B. $60 was enough to get a 500W power supply with decent reviews and reasonable efficiency. Unless you’re getting a monster GPU, which I wasn’t, 500W is more than enough, and there are several reasonably rated brands to choose from.

CPU: Intel i7-4770K. In past purchases, $300 has been a price point that’s the final point before the price/performance curve gets out of control (the next stop up is often $500-600, and above that you can pay $1,000 for a CPU that’s only marginally better). I definitely don’t need the extra cores or ability to use multiple processors that more expensive CPUs provide, and the i7-4770K provides basically the best single core performance available (at the time I was building) which still helps for gaming. Intel has CPUs that offer 90% of the performance at 2/3rds the price, but given that I expect this desktop to last 3-4 years, the extra $100 was tolerable.

RAM: 32GB (4 x 8GB) G.Skill Ripjaws Z PC3 1866 CL9. Picking RAM is truly crazy. There is a dizzying array of SKUs, with infinitesimal differences between options. I spent way too much time trying to understand the relationship between clock speed (1866MHz in this case), CAS latency (9 in this case), and timings beyond the primary CAS latency, to figure out what was worth paying for. This is an area where you can really pay more for nothing – getting higher specs that yield zero performance benefit. For my particular configuration (single processor i7), it seemed like PC3 1866 was the fastest RAM you’d get any real benefit out of; above that, RAM got expensive quickly, with marginal if any benefit. 32GB was still quite a splurge, though; at $340, the RAM was slightly more expensive than the processor – and I’m sure 16GB would have completely sufficed. But now I can survive days when the nightly untested build of Chrome (Canary) has memory leaks, and I can laugh when Lightroom eats 5GB and just load Starcraft without quitting it anyways.
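One way to cut through the RAM specs is to convert CAS latency from clock cycles into nanoseconds. DDR transfers data twice per clock, so a DDR3-1866 module’s clock is about 933MHz, and each cycle lasts 2000 / (data rate in MT/s) nanoseconds. The Python sketch below applies that conversion; apart from the 1866 CL9 kit, the comparison kits are hypothetical examples, not options from my actual shortlist.

```python
def cas_latency_ns(cas_cycles, data_rate_mt_s):
    """First-word CAS latency in nanoseconds.

    DDR moves data on both clock edges, so clock MHz = data rate / 2, and one
    clock cycle lasts 1000 / (data_rate / 2) = 2000 / data_rate nanoseconds.
    """
    return cas_cycles * 2000 / data_rate_mt_s


if __name__ == "__main__":
    for rate, cl in [(1600, 9), (1866, 9), (2400, 11)]:
        print(f"DDR3-{rate} CL{cl}: {cas_latency_ns(cl, rate):.2f} ns")
```

Faster kits usually come with proportionally higher CAS numbers, so their absolute latency ends up similar, which is part of why paying for higher specs can buy so little.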

Motherboard: MSI Z87M Gaming. There’s a similarly huge number of motherboards for a given type of CPU, but at least this choice was easier to whittle down; at $140 the Z87M had what I needed without the extraneous features I didn’t. As a mid-range motherboard, it has the flexibility to add a second GPU without the cost of top-end boards that accommodate 3 or 4 GPUs. It’s got a decent onboard Gigabit Ethernet controller, which could be handy, but skips the cost of integrated 802.11ac Wi-Fi (which I don’t need, and if I did, I’d prefer an external adapter). The onboard audio reviewed decently, though I use an external DAC anyway. Unlike with RAM, motherboard reviews were more opinionated, and this board fared reasonably well in them.

GPU: Gigabyte GTX 660. I’d previously purchased this GPU, and just moved it over. Picking a GPU is pretty easy: pick your game and the resolution/settings you want to play it at, and there’s usually a clear winner, or at most two contenders, at that point in the price/performance curve. The GTX 660 handles Starcraft 2 at 2560 x 1600 at reasonably high settings, and that’s good enough for me. At least until 4K :).

Storage: Samsung 840 EVO 500GB SSD. Going to an SSD, which I did with my last system, is perhaps the most impactful upgrade you’ll ever feel. At $310, going for 500GB was certainly a splurge, and 250GB would probably have been plenty. However, I spent countless hours fighting for free space on my prior system, whose 120GB SSD (which actually cost more than this 500GB drive when I first purchased it!) was perpetually almost full. That meant compromises, like having to put photos I was processing on a slower traditional HDD. To make sure I don’t have to do that for the next 4 years, I went with the 500GB option. Samsung’s 840 EVO series had top reviews at the time of purchase and didn’t cost measurably more than the alternatives, so this was another category with a clear winner. I moved a 1TB HDD from my old system over for mass storage; it will be full soon, but it’s quite inexpensive to upgrade.

Optical: LG BD-ROM / DVD-RW. It’s hard to describe how dumb I felt when I was putting together my Windows Home Server box a few years ago, got to the point of installing the OS… and realized I hadn’t bought an optical drive. $50 buys a decent Blu-ray reader / DVD writer combo drive, so this was a straightforward choice.

Operating System: Windows 8.1 Professional. I considered sticking with Windows 7 after several bad experiences with Windows 8 machines, but Windows 8.1 is tolerable overall. I just pretend it’s Windows 7, and hit Win+D anytime I accidentally launch a non-desktop app. Even the Start screen is tolerable – just close your eyes, type the name of the program you want, and that’s usually the extent of your interaction with the new Windows UI. That’s just as well, because Windows 8.1 was designed to seamlessly bridge your 8-10″ tablet with your 14″ laptop – and so the design, for me, utterly fails on a 30″ monitor. No, I did not get this big monitor to run tablet apps full screen with fat touchable fonts! My friend George, who works at Microsoft, was kind enough to give me the Pro version of Win 8.1, which was very nice!
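One way to make sense of the speed-versus-CAS question from the RAM section is to convert both numbers into nanoseconds. Below is a minimal Python sketch assuming the common rule of thumb for DDR memory: latency ≈ CAS cycles × 2000 / data rate, with the data rate in MT/s (the “1866” figure is really transfers per second; the actual I/O clock is half that). The function name and the two comparison kits are hypothetical illustrations, not part of the original build.

```python
def cas_latency_ns(cas_cycles: int, data_rate_mts: int) -> float:
    """Approximate first-word latency of DDR memory, in nanoseconds.

    Assumes the usual rule of thumb: DDR moves data twice per clock, so the
    I/O clock is data_rate / 2 MHz and one cycle lasts 2000 / data_rate ns.
    """
    return cas_cycles * 2000 / data_rate_mts


# The kit from the build, plus two hypothetical kits for comparison.
kits = {
    "DDR3-1600 CL9 (hypothetical)": (9, 1600),
    "DDR3-1866 CL9 (this build)": (9, 1866),
    "DDR3-2133 CL11 (hypothetical)": (11, 2133),
}

for name, (cl, rate) in kits.items():
    print(f"{name}: {cas_latency_ns(cl, rate):.2f} ns")
```

Run as-is, this prints 11.25, 9.65 and 10.31 ns respectively: the higher-rated 2133 kit, with its looser CAS rating, ends up no lower-latency than the 1866 kit. It ignores bandwidth and the secondary timings entirely, so it's only one piece of the picture.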

The pieces I wasn’t stealing out of my existing computer:

Given the adequacy of my old PC, why would I spend ~$1,200 building this new and somewhat indulgent machine? In a word, Lightroom. While other apps weren’t blazing fast, I was spending a lot of time in Lightroom, and a significant chunk of that time was spent waiting for it to respond. In part, it’s because Lightroom “upgrades” have, over the years, added capability at the cost of speed; Lightroom 2 was fast! But more significantly, moving to the 36MP Nikon D800, with its 40-50MB RAW files, really hurt performance. With load times approaching 10 seconds per image, and ~10,000 images shot per year (each of which I need to load at least once, even if I’m going to delete it), I was literally spending a full day a year waiting for Lightroom to respond. Granted, no computer makes it go infinitely fast, but I figured even if I saved ~3-5 seconds an image, it’d be worth it.
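The “full day a year” estimate is simple arithmetic, and easy to check. Here is a small Python sketch using only the rough figures above (about 10 seconds per image load, about 10,000 images a year); the function and constant names are purely illustrative.

```python
SECONDS_PER_HOUR = 3600


def yearly_wait_hours(seconds_per_image: float, images_per_year: int) -> float:
    """Hours per year spent waiting for images to load."""
    return seconds_per_image * images_per_year / SECONDS_PER_HOUR


IMAGES_PER_YEAR = 10_000  # rough figure from the post

before = yearly_wait_hours(10, IMAGES_PER_YEAR)
print(f"Waiting at ~10 s per image: {before:.1f} hours a year")

# Hours recovered if a faster machine shaves 3 or 5 seconds off each load.
for saved in (3, 5):
    hours = yearly_wait_hours(saved, IMAGES_PER_YEAR)
    print(f"Saving {saved} s per image: {hours:.1f} hours a year")
```

That comes to about 27.8 hours of waiting, a bit more than a full day, and saving 3 to 5 seconds per image would recover roughly 8.3 to 13.9 hours a year.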

Did this pay off? Somewhat. Lightroom is certainly faster with all my RAW files on SSD, and with the faster processor, and the increased speed reduces frustration for sure. But I’m still looking forward to Google+ Photos Auto Enhance getting to the point where manually selecting and tweaking things becomes entirely unnecessary! Until then, I suspect this desktop will more than meet my needs.

-

Things I Use: ER-4PT, Qi Charger

Lack of time, or some excuse resembling this, has translated into a total lack of recent updates – though I’ve been accumulating a backlog of sorts to post about. First, a couple of things I started using back around the start of the year!

Etymotic ER-4PT Earbuds

Alas, on a trip late last year, the set of earbuds I was using at the time – Ultimate Ears super.fi 5 Pro – failed to make the final connection from Vancouver to Seattle, and as a result, were probably unceremoniously tossed out along with the usual seatback pocket trash. Which is unfortunate, since they weren’t particularly inexpensive, nor were they that old (they served me for a little over 5 years, with a replacement cord less than a year ago). After I didn’t get a response (as expected) to a Lost-and-Found filing with Air Canada, I found myself unwillingly in the market for a new pair of earbuds.

The UEs were actually the first earbuds I ever purchased. I prefer big speakers over small speakers, small speakers over headphones, and headphones over earbuds. However, my headphones are entirely impractical for travel since they’re an open design that allows sound to pass both in and out; good for Olivia’s early days – or should I say, sleepless nights requiring a rapid response – but not so good for the constant noise and close quarters of an airplane. And with almost half a million miles of travel during the time I owned the UEs, the airplane scenario was all too common. While I travel far less frequently than I used to, with two trips to Asia on the calendar for early 2014, I definitely didn’t want to be stuck with airplane headphones that can now miraculously be manufactured for perhaps 20 cents – with sound quality to match.

So, I went through the same routine that led me to the now-discontinued UE earbuds I’d purchased previously – going through pretty much all the reviews and comparisons I could find in what I thought was a “reasonable” price range. Earbuds are like anything else in audio: you can spend a near-infinite amount for increasingly infinitesimal gains in sound quality, and while I care about sound quality, I’m not an audiophile with golden ears that detect even the faintest of flaws in sound reproduction. Neither, I suspect, are most of those who purchase $1,000 headphones, but that’s another matter. At $299, the ER-4PT was definitely more than I was looking to spend, but it didn’t take long for my weak brain to rationalize the purchase with exaggerated images of how much nicer the flight would be.

As expected, for that price, the ER-4PTs came with a whole array of useless packaging and other material, which I assume is designed to convince me that I didn’t spend too much for the small improvements over a $50 pair of earbuds:

That box was literally a box within a box within a box – which, as expected, I haven’t opened or touched since taking the headphones out. I’m guessing consumer product makers have done their research and shown that all this superfluous stuff makes purchasers feel better about the premium they paid, though on me it somehow has the opposite effect and makes me wonder why I bought something so wasteful.

Inside came paperwork assuring me that the flat frequency response of the headphones was manually tested, by a nice person known only as “K.M.”:

The bump in the frequency response, reassuringly, comes from having to correct for the natural frequency response of the ear. While this is helpfully explained in the material that accompanies the ER-4PTs, this fact seems to undermine the point of the graph; it’s one thing to look at the frequency response for a speaker, or the MTF graph for a lens, where it’s well accepted that you want it to be as close to a straight line as possible. It’s quite another thing to look at the graph above and know whether it’s the perfect reciprocal for the frequency response of the human ear canal. They could have literally printed anything and I’d be taking their word for it.

So, how’d the ER-4PTs actually perform? Ignoring the fact that pretty much anyone spending a bunch of money on audio gear is extremely biased towards thinking that it sounds great, I was actually pleasantly surprised by the ER-4PTs. While I primarily drive them with non-audiophile devices – usually my Nexus 5 and 2013 Nexus 7 – I’ve now spent a lot of time with these earbuds and they surprise me in a way that the UEs never did. I can be listening to a random assortment of tracks on a plane, and when it switches to a really well-mastered track with clear vocals – often Norah Jones, or Diana Krall, or something along those lines – it’s actually stunning how clear and pleasant it sounds. Perhaps more importantly, the rather odd-looking earbud design, which looks like a stack of three earbuds of increasing size, is much easier to insert and wear for an extended period in a way that gets you the best the headphones can offer. I tried all the tips that came with the UEs, and while I was eventually able to fiddle things into place, it took much more effort and was far less consistent. I still don’t really like the feel of anything in my ears, but on a ~10 hour flight back from Korea – my first trip with these earbuds – I listened to music from start to finish, and fully convinced myself that going for the ER-4PTs was not, in fact, folly.

Qi Wireless Charger

Charging your phone by plugging a micro-USB cable into a small, flimsy port on your phone designed for this purpose – and doing this, say, 700 times over the seemingly short two-year lifecycle of a modern smartphone – is practically begging for something to go wrong. Indeed, my Galaxy Nexus had to go back to Samsung for an issue with the charging port, and Valerie managed to get her phone into a flaky state where the USB charging pins looked a little questionable. So investing in a wireless charger that spares your devices the daily connection and disconnection seems like a good idea. Paying ~$50+ for a name brand seemed unnecessary, but $20-25 for a generic model seems perfectly fine. I got a pair of Ruix units, but it seems some OEM makes these and lets anyone sell them under their own brand.

There’s really not much else to say, except that there may be compatibility issues with older devices (I had to get a specific model for Valerie’s Nexus 4, and it’s a bit spotty), and that you need to be careful with positioning so you don’t wind up with a dead phone in the morning. But compared to losing my Galaxy Nexus for a couple of weeks of warranty service, and having to completely wipe it, this is a purchase I feel is well worth it.

-

More Things I Use

One of the things I started this blog for was to share the things I use and why – in large part so that I’d have a record one day of all the things I’d forgotten I’d even gone through. Since my memory isn’t getting any better, now is as good a time as any to provide a quick update on a few new items this year.

Google Nexus 5

When I received the Galaxy Nexus a couple of years ago, it was a big step up from the HTC Sensation I’d purchased just three months prior – and that phone in turn was already vastly better than the Blackberries I’d relied on for a number of years before that. I was comfortable enough with it to pass on upgrading last year, but the Nexus 5 was tempting enough that I ordered it shortly after it was announced.

There are 1,001 qualified reviews of the Nexus 5, so I can’t add much of value. It’s a great phone at any price, but at $349 it costs roughly half as much as other top-end models while seemingly sacrificing very little. Even if the phone cost as much as the flagship Samsung or HTC devices, I’d probably still go with the Nexus 5 simply to have the latest, clean Android release (though I do have work-related reasons for wanting this). A few other notes:

- The screen looks great, though I strongly doubt that I can tell the difference between 1920 x 1080 and 1280 x 720 on a screen this size. Seriously, even when I hold the phone so close that it’s at the minimum focus distance of my eye, I can’t come close to seeing individual pixels.

- There were some initial issues with the camera, but as far as I’m concerned, it’s a step up from the Galaxy Nexus (which was mediocre), it’s quite decent with the current KitKat updates, and I’m really impressed with the HDR+ mode. Despite the name, it doesn’t produce oversaturated, unrealistic HDR images; it really just takes a burst of shots and combines them. At the same time, it hasn’t at all reduced my inclination to bring the D800 when I think I’ll want pictures.

- I went with the 32GB model, and I’m glad I did, because SmugMug’s Android app downloads screen-sized downscaled images to a local cache; for me, these 1080p images take up 16GB of my storage on their own. I find this well worth it to have an entire lifetime of photos available instantly with or without a connection.

- Feel-wise, I slightly prefer the Galaxy Nexus, and I do find the ceramic buttons have a bit more wiggle than I’d like, but it’s still comfortable to use.